#R

-

🔗30 Day Map Challenge (Points): AIM Total Foliar Cover

I’ve been a bit busy, so I’m just getting to my first map challenge of the month. For it, I’m using my favorite dataset, the Bureau of Land Management’s Assessment, Inventory, and Monitoring (AIM) dataset. Here is total foliar cover for every point completed in the western Lower 48 states. You can find the dataset here.

-

🔗Mapping Plants for the BLM

A few years ago the Bureau of Land Management office I worked for was part of a project to map vegetation in sage-grouse habitat. To do that, we got 10 cm resolution aerial imagery across the species' range. The following is a summary of the project, what I learned, what I would do differently, and why the project ended without ever really contributing to any on-the-ground management.

-

🔗Will There be a Raftable Release out of McPhee Dam in 2024

I have been less enthusiastic about predicting whether the Dolores will have a raftable release this year because of an average snowpack. In January there was potential for a release, when the model was predicting 20 days. But other than that the model has been fairly pessimistic. In the last couple of days the model has had some really high predictions, above 40 days. I think either the model thinks that we are close to having enough snow for a release or there was some sort of weird reading at the SNOTEL sites. Either way, I would say that there is a chance the Dolores runs this year, but I'd guess it is unlikely.

-

🔗Getting Started With RGEE

I need to get some data hosted through Google Earth Engine. I’ve played around with the JavaScript and Python libraries, but at heart I’m an R developer and would prefer to use Earth Engine in R if I can.

-

🔗Just Do Statistical Analysis!

I’ve long wanted to get better at statistics. And largely I have. But I still have a long way to go. I feel like I’m the best at machine learning type stuff, mostly using decision trees. The predictions seem to be the best. But I’m not really into prediction as much as I am into learning about the relationships that exist in the world. A lot of machine learning is a black box.

-

🔗Using Rmarkdown with Eleventy

I write a lot of R in my life. I’m also a web developer who does most of my writing on this blog in markdown. Up until now these two worlds have been separate. I know and use Rmarkdown at work to write reports, but it has always seemed like a pain to get Rmarkdown to work on this site, which is generated using 11ty (Eleventy). Up until now, to get my R work on this site, I have been writing R in another document and manually copying code and outputs over to markdown files. Given how inefficient that process is, I figured it was time to work out how to write Rmarkdown and render it more seamlessly with this site.

-

🔗Predicting if the Dolores River Will Have a Raftable Release V2 - Summary

This post includes just the running prediction of whether the Dolores River will have a raftable release below McPhee Dam this winter. It contains only the results from a much longer post I put up a few days ago. This prediction is an update to the model I made in the previous post. The only change is that I hadn't known you could run XGBoost regression as count/Poisson, which looks to be much more accurate (which makes sense).

-

🔗A few helpers for working with the {targets} package in R.

I started to use the `{targets}` package for some of my larger projects. It was a little challenging for me to wrap my head around, but after working through some initial problems I think it will help me stay organized and write code in a more composable way. Below are a few notes that I needed to keep coming back to when I was getting started. If you need a comprehensive `{targets}` getting-started guide, I suggest the targets book linked above or in the References section below.

-

🔗R - Fatal Error: Unable to open the base package

On my Windows work computer I was having a weird problem where R would break after I restarted my computer. The basics of the problem were: I installed a new version of R, version 4.2.2 to be exact, and R would work until I restarted my computer. After that, when I started R it would crash and I would get the error `Fatal Error: Unable to open base package`. For weeks I googled around, and most of what I could find had to do with RStudio not finding the right version of R. Based on what I found, I deleted all of the .Rprofile and .Renviron files that I could find. I deleted all the old versions of R on my machine. Nothing worked. I reinstalled R version 4.2.2 like 5 times. Still the same problem. I even installed R on an external hard drive because I thought that my work proxy was doing something weird.

-

🔗Predicting if the Dolores River Will Have a Raftable Release V2

Will there be a raftable release below McPhee Dam this year? I hope so. In this post I'll use the `{tidymodels}` R package to predict the number of raftable release days with XGBoost. This post is an update to an earlier post. I take a slightly different approach to build this model and I think the model is more accurate than the last model I built.

-

🔗Download all Ecological Site Descriptions for a MLRA from EDIT

I recently needed to download Ecological Site Descriptions (ESDs) for a large part of the area I work in. The NRCS, Jornada Experimental Range, and New Mexico State University have a handy website, EDIT, that provides all ESDs by Major Land Resource Area (MLRA), wrapped in a nice user interface. In the past I've just used the site to view and download the ESDs as I needed them. But today I noticed that the EDIT website added a Developer Resources page. It even has examples in R. I figured it was time to get to know the EDIT API.

-

🔗Tidy Tuesday: Week 2 Bees

A post about bee colonies. I wanted to make a plot for each state and have each plot placed at the x-y location of that state on a map. I used the `geofacet` package.

-

🔗Helpful Rmarkdown Tips

I've been using Rmarkdown to write reports at work. Whenever I start a project I end up having to look stuff up that I've looked up a million times before. The setup ends up taking so much time and brain power that I end up spending half a day on it. So I figured I'd write it up so I don't have to google my face off to get up and running.

-

🔗How to pass a column name as a function parameter to a dplyr function in R.

If you need to pass a column name as a function parameter to a dplyr function/verb such as `filter()` or `mutate()`, do this:

-

🔗Some AIM Data Visualizations from Work

I don't put a lot of what I do at work here. I try to keep work at work and a lot of what I post here is for fun. Lately though, I've been producing some visualizations from Assessment Inventory and Monitoring (AIM) data that I think are worth sharing.

-

🔗Gunnison sage-grouse Habitat Comparison

Similar to my post last week, we are again looking at an AIM data visualization. The last visualization was created from the raw AIM data, pulled from ArcGIS Online. This visualization is pulled from TerrADat, AIM's online data repository for QA/QCed data, and looks at cover over three populations of Gunnison sage-grouse in Colorado: the San Miguel population, the Gunnison population, and the Pinon Mesa population.

-

🔗VS Code: Add a Rmarkdown Code Chunk Snippet Key Binding

I recently started using VS Code for R development. There is an awesome newish R extension for VS Code. It takes a little setup and works best with the addition of radian, a Python package, but otherwise it works really well. There are solid instructions on the extension page. I love RStudio for the most part, but I got frustrated by the lack of editor customization (mostly line height). So here we are.

-

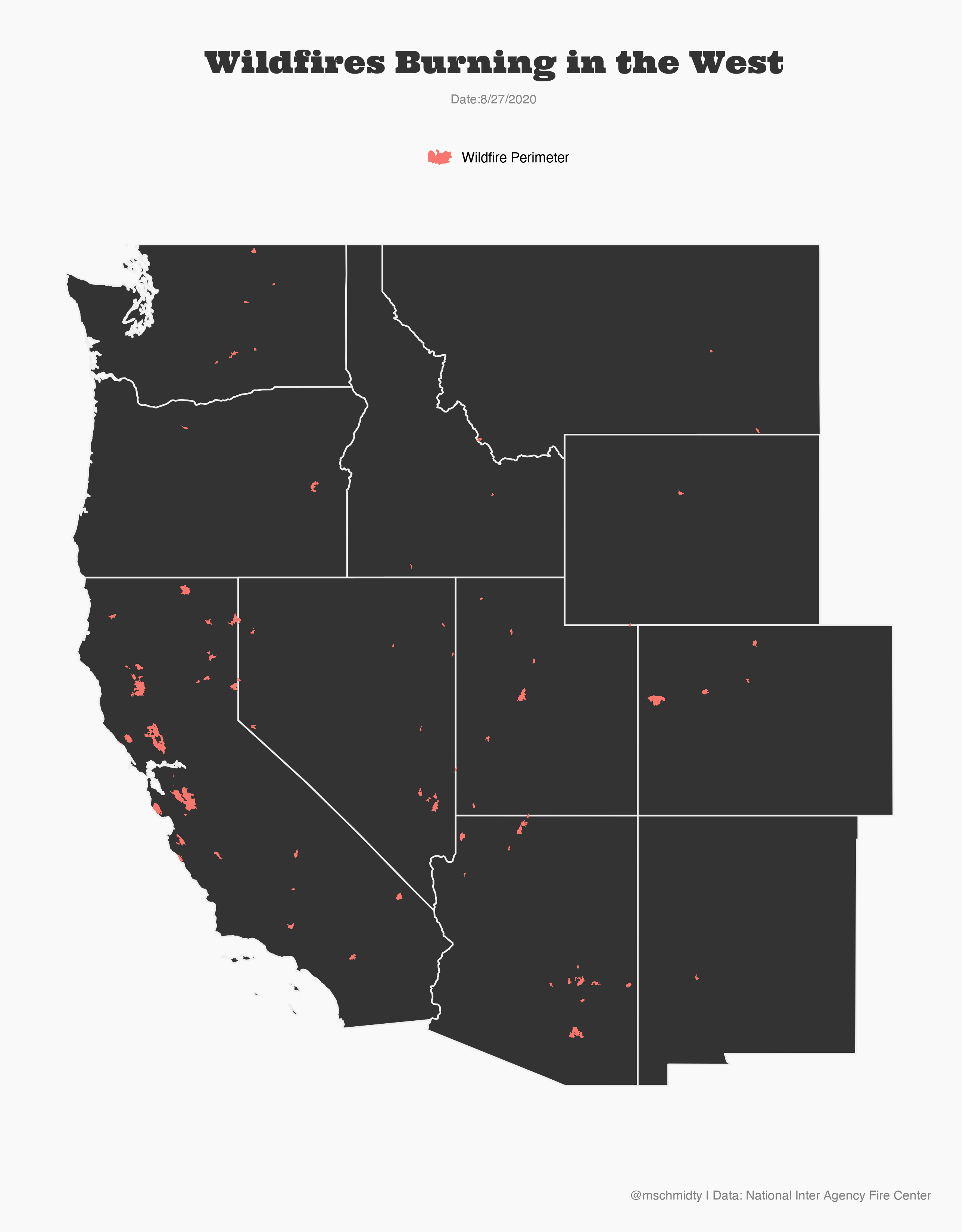

🔗Wildfires in the West

The second-largest fire in Colorado's history is currently burning. California is also having a historic fire season.

-

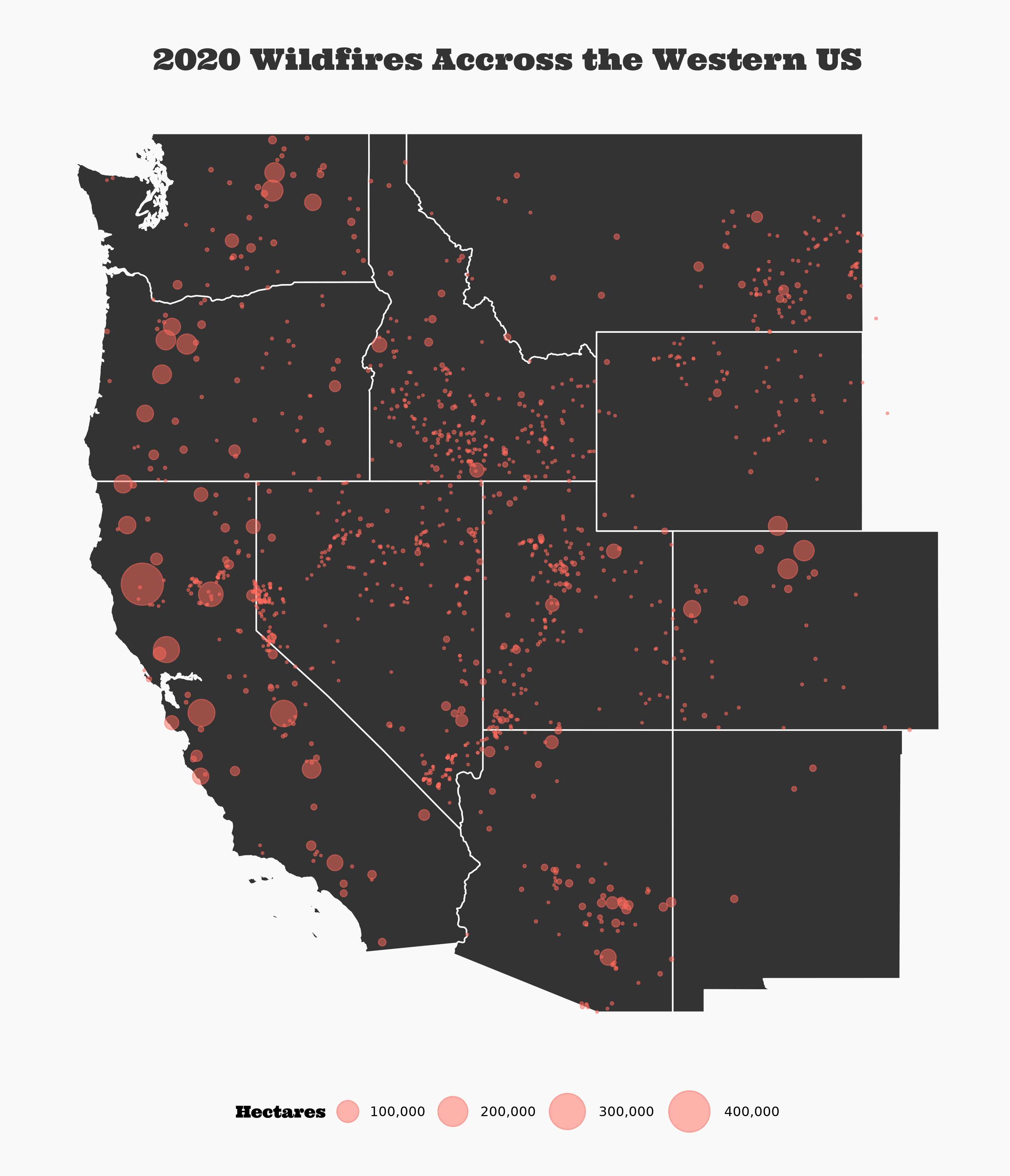

🔗#30DayMapChallenge Day 1 Points

I'm starting the #30DayMapChallenge Points prompt by continuing my obsession with the current fire season. Here are all the fires from 2020 mapped as points. What a year.

-

🔗#30DayMapChallenge Day Green

For the seventh day of the #30DayMapChallenge, I made a map of my old neighborhood using OpenStreetMap data. I feel lucky to have grown up near mountains in a city.

-

🔗First R Package

I've been trying to participate in #tidytuesday. While making plots I found myself consistently repeating the same `theme()` attributes for each plot. To solve this repetition, I decided to produce a package with my own theme.

-

🔗TidyTuesday: Energy Usage in Europe

I haven't had a ton of time lately. On a recent road trip, I tried out a Tidy Tuesday submission on European energy usage. Because I didn't have enough time, I wanted to make something simple and work on making it really easy to read. I think I did that. I would have liked to do a bit more with the theme. Maybe next time.

-

🔗Will There be a Raftable Release out of McPhee Reservoir

I live next to the Dolores River. It’s an often overlooked gem of the southwest. It runs from just outside Rico, Colorado at its headwaters to the Colorado River near Moab, Utah. Rafting it is an experience.

-

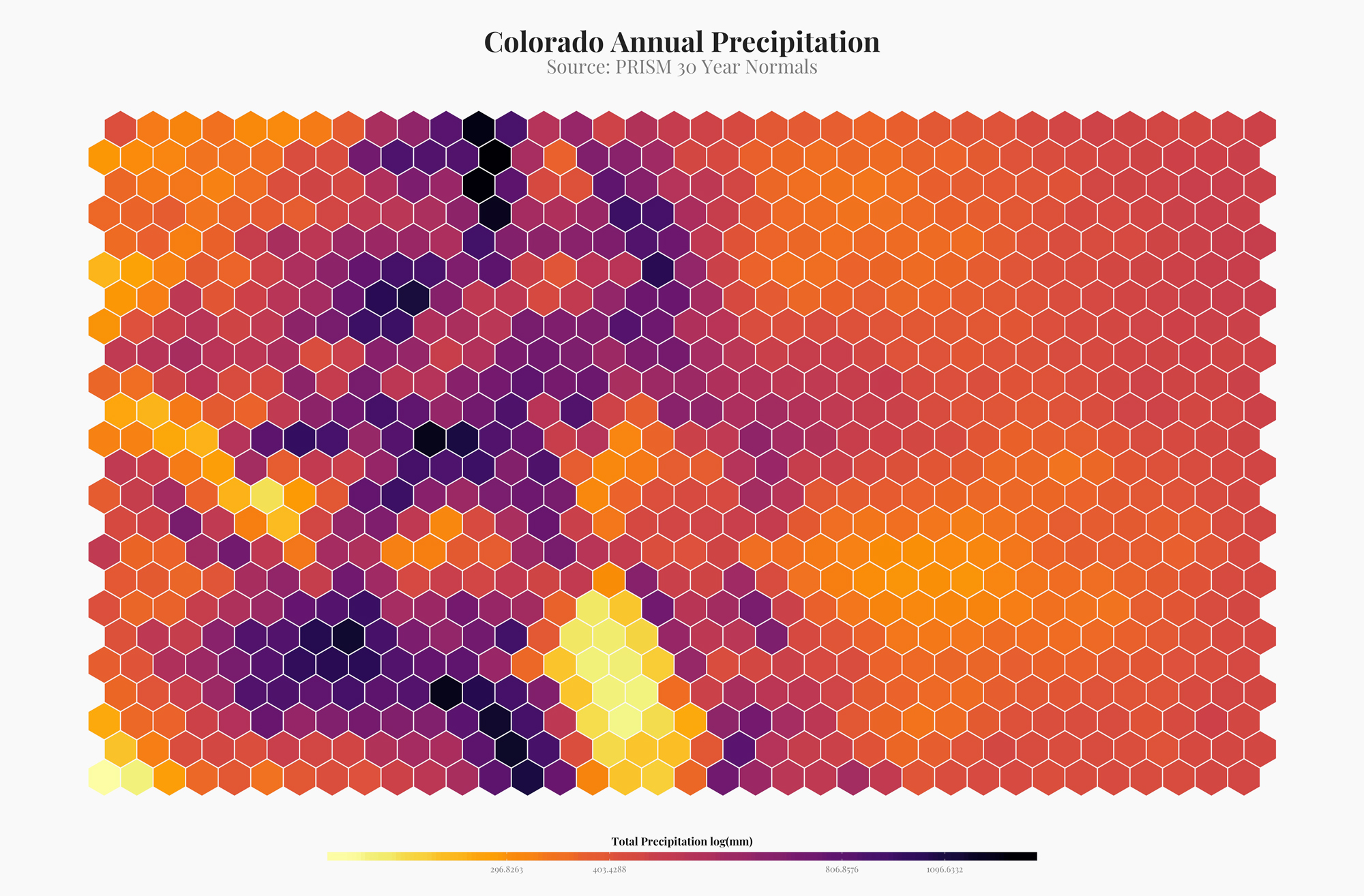

🔗Colorado: Hex Plots, API packages, and R

I've been seeing a lot of hex plots made with R. They are a way to semi-artistically visualize spatial data. Although I don't think this is completely appropriate for most visualizations, it looks amazing. Here are a few examples of how I made hex plots in R.

-

🔗Spatially Balanced Sample Designs in R with `spsurvey::grts()`

We've been using spatially balanced stratified study designs more frequently at work these days. They are a good way to make probabilistic inference over large areas. A popular method of creating these designs is using the R function `spsurvey::grts()`. The following is a basic (very basic) explainer of how to get up and running with the `grts()` function and what it is. But first, a bit about GRTS and spatially balanced study design before we get coding.

-

🔗Statistics in R: Resources for Understanding Statistics in R

This is a collection of resources that have helped me learn and understand statistics in R.

-

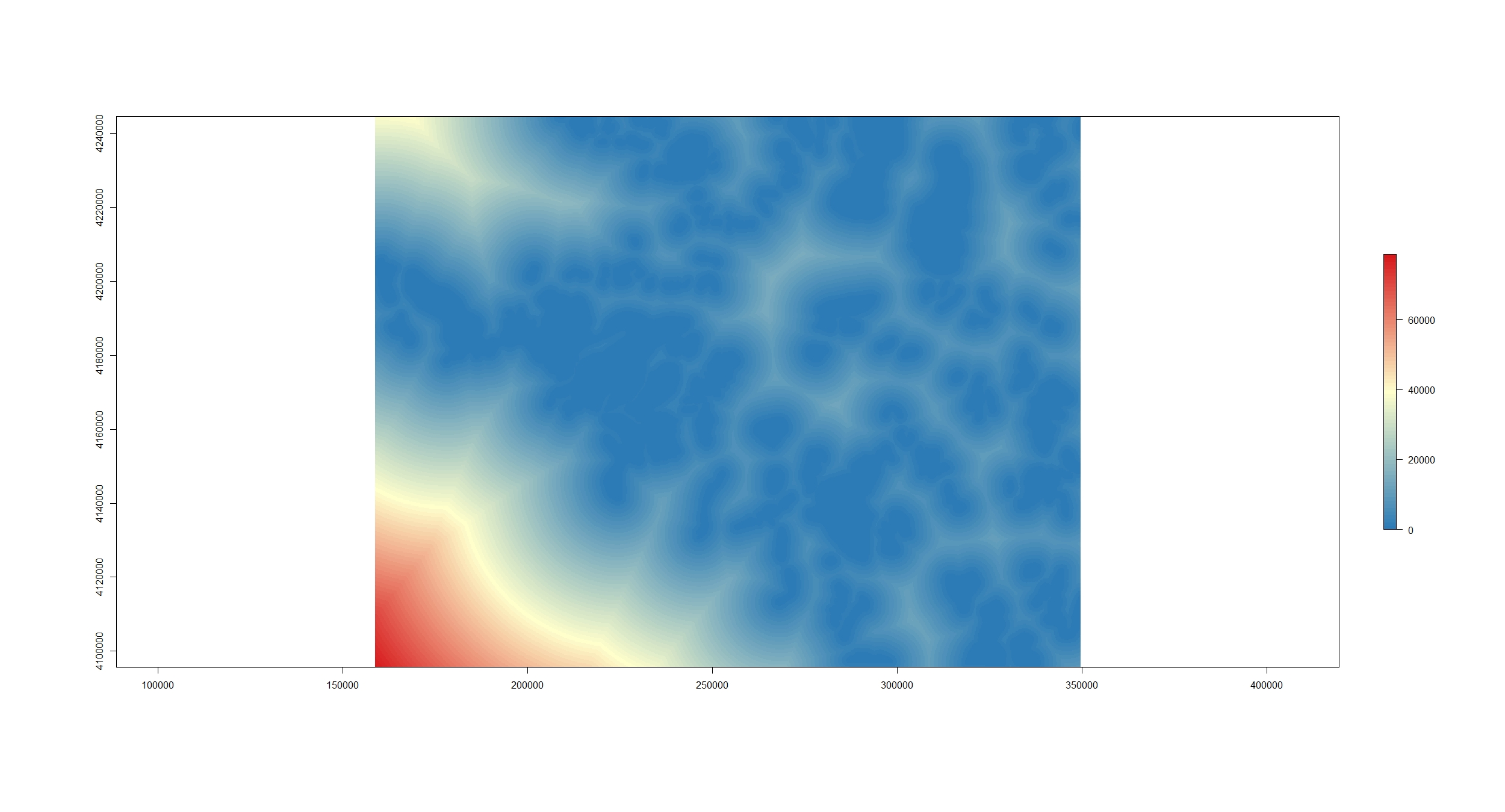

🔗Kernel Density Estimation in R

For a recent project I needed to run a kernel density estimation in R, turning GPS points into a raster of point densities. Below is how I accomplished that.
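As a rough sketch of the idea (not necessarily the approach used in the post), `MASS::kde2d()` estimates a two-dimensional kernel density on a regular grid. The coordinates below are simulated stand-ins for GPS points, and the grid size of 50 is an arbitrary assumption.

```r
library(MASS)  # provides kde2d(); a recommended package bundled with most R installs

set.seed(42)
# Hypothetical GPS fixes (longitude, latitude), simulated for illustration only
lon <- rnorm(200, mean = -108.5, sd = 0.05)
lat <- rnorm(200, mean = 37.5, sd = 0.05)

# Estimate the 2D kernel density on a 50 x 50 grid spanning the points
dens <- kde2d(lon, lat, n = 50)

# dens$x and dens$y are the grid coordinates; dens$z is the density surface
dim(dens$z)
```

The resulting list can be passed to `image()` or `contour()` for a quick look. Converting it to a raster object would take an extra step with a spatial package, which is not shown here.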

-

🔗Cumulative Distribution Function

Cumulative distribution functions let you answer the question: what percent of my sample is less than or greater than a given value? For example, I work with sagebrush cover frequently. With a cumulative distribution function I can answer the question: what proportion of my plots with sagebrush have greater than 90% cover?
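A minimal sketch of that kind of question, using base R's `ecdf()`. The cover values and the 30% threshold are made up for illustration and are not AIM data.

```r
# Hypothetical sagebrush cover values (percent) for ten plots
sagebrush_cover <- c(5, 12, 18, 22, 25, 31, 40, 47, 55, 93)

# ecdf() returns a function giving the proportion of values <= x
cover_cdf <- ecdf(sagebrush_cover)

# Proportion of plots with cover at or below 30%
prop_at_or_below <- cover_cdf(30)

# Proportion of plots with cover greater than 30%
prop_above <- 1 - cover_cdf(30)

prop_at_or_below
prop_above
```

Calling `plot(cover_cdf)` draws the step function, which makes it easy to read off proportions for other thresholds.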

-

🔗Subset Raster Extent w/ R

A little snippet that helps subset raster extents.

-

🔗Extract Raster Values

Below is a method that uses the raster package's `extract()` function to get a subset of RasterBrick values. To be specific, I need to extract all raster values that are within a polygon boundary. In the past I have used `crop()`, `mask()`, and then the `getValues()` function from the `raster` package to subset data values within a polygon. But that method returns a data frame with a ton of NA values (anything outside of the crop area in the raster is an NA). This is fine most of the time, but the current project that I am working on requires almost all of the memory on my computer. I'm working with extremely large rasters (2 GB). Removing the NA values after the `crop()`, `mask()`, and `getValues()` process crashes my computer. So I need a more efficient process.

-

🔗Remote Sensing Tools

A collection of tools and documentation on remote sensing.

-

🔗Random Forest Resources and Notes

Resources to understand and run random forests in R.

-

🔗Creating a polygon from scratch in R

A quick little snippet for making a polygon with coordinates out of thin air in R.

-

🔗Raster Distance Calculations

There are many cases when I have needed to calculate a distance from a point or many points to a feature in GIS. Until recently I have always used the near tool. This works really well for small datasets but can take forever with larger datasets. The near calculation also only works with points (I'm sure that there is a raster equivalent I just haven't done the research on it). I also really like to document where I got my data. You can do that in ArcGIS but it is an added step to do it.

-

🔗Classifying High Resolution Aerial Imagery - Part 2

I have been attempting to use random forests to classify high resolution aerial imagery. Part one of this post series was my first attempt. The aerial imagery dataset that I am working on is made up of many ortho tiles that I need to classify into vegetation categories. The first attempt was to classify vegetation on one tile. This note documents classifying vegetation across tiles.

-

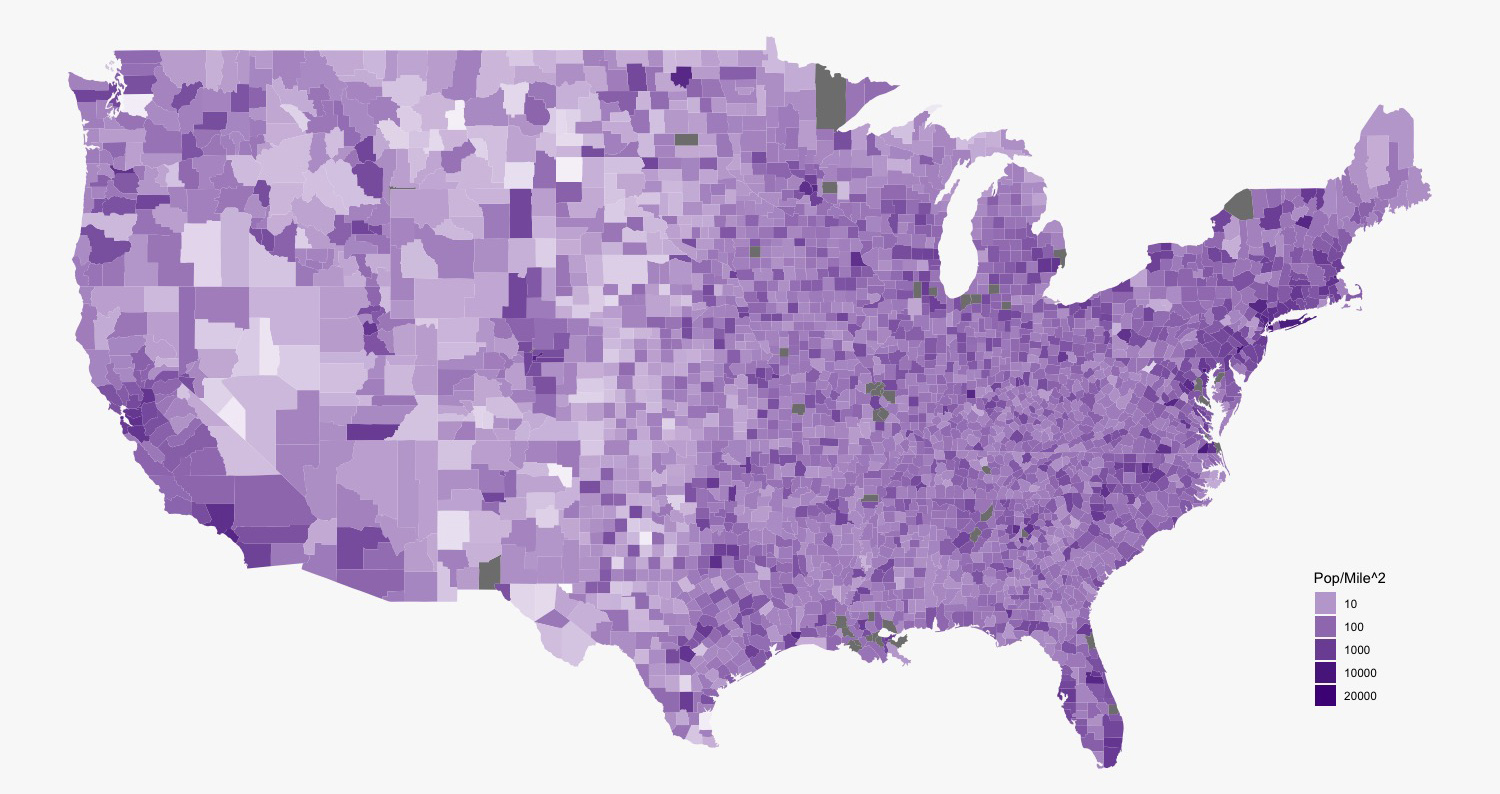

🔗Making a Choropleth Map in R

Load the libraries.

-

🔗Classifying High Resolution Aerial Imagery - Part 1

The following note documents a proof of concept for classifying vegetation with 4-band, 0.1 m aerial imagery. We used sagebrush, bare ground, grass, and PJ for classes. Approximately 300 polygons were used as training data.

-

🔗Data Science Resources

A collection of resources on data science and machine learning primarily in R.

-

🔗Colorado Avalanches By The Numbers in R

A look at avalanches in Colorado. Please note I'm not an avalanche expert, so take these interpretations with a healthy dose of skepticism.

-

🔗Working with DIMA Tools and Making a Plant List from Species Richness Table

This is a series of notes that works with the DIMA database. The DIMA was produced by the Jornada Research Center for the Assessment Inventory and Monitoring framework.

-

🔗A Method for counting in a sequence, reset by a binary event in R

A method for creating a variable that counts sequentially until a binary event occurs in another variable.
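One way to sketch this in base R, which may differ from the method in the post: use `cumsum()` on the event flag to label each run, then count within each run with `ave()`. The `event` vector is hypothetical, and this version restarts the count at 1 on the row where the event occurs.

```r
# Hypothetical binary event flag: 1 marks the rows where the event occurs
event <- c(0, 0, 1, 0, 0, 0, 1, 0)

# cumsum() increments at each event, giving every run its own group id
run_id <- cumsum(event)

# Count rows within each run; the counter restarts at 1 on each event row
count <- ave(seq_along(event), run_id, FUN = seq_along)

data.frame(event, run_id, count)
```

If the counter should read 0 on the event row instead, subtracting 1 from `count` within runs that start with an event is one option.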

-

🔗How to download and work with LSAT data - a better approach

My last post was about using the R `getlandsat` package to work with Landsat data from NASA and the USGS. This post will be a brief refinement on that process.

-

🔗Working With Landsat8 Data from the USGS

We are trying to monitor drought in sage-grouse habitat. Because we don't have historic on-the-ground data collection, I thought that it would be good to try to do this with Landsat data. Landsat data is collected by the USGS and NASA about once every 16 days.

-

🔗Cultural Model R Scripts

The following are scripts that I used to make a cultural prediction model. It uses topographic, hydrologic, and biological GIS information to predict areas where archaeological sites likely occur on the landscape.

-

🔗Landsat First Try
